Motion AI, created by Kling AI, is an AI-powered tool for creating, controlling, and editing motion in digital content. By automating motion control workflows, it goes well beyond traditional animation techniques and simplifies complex setups. Drawing on spatial relationships, timing, and physics-simulated behavior, Motion AI produces smoother, more natural motion than traditional tools.

Motion AI works as a smart motion control engine, so animators no longer have to keyframe every movement. Users set the motion intent (for example, how the camera moves, what it tracks, or how characters interact), and Motion AI generates accurate, fluid motion to match. This approach reduces production time while delivering the same scope and quality, and it makes motion design and animation accessible to creators at every skill level.

Motion AI can edit almost any aspect of an animation. You can adjust its speed and intensity, and you can even remove parts of it without breaking the sequence as a whole: the AI rebuilds the surrounding motion so that it stays fluid and the gaps feel natural rather than mechanical. This is especially valuable for cinematic camera shots, where applying the AI removes the mechanical feel from the sequence.

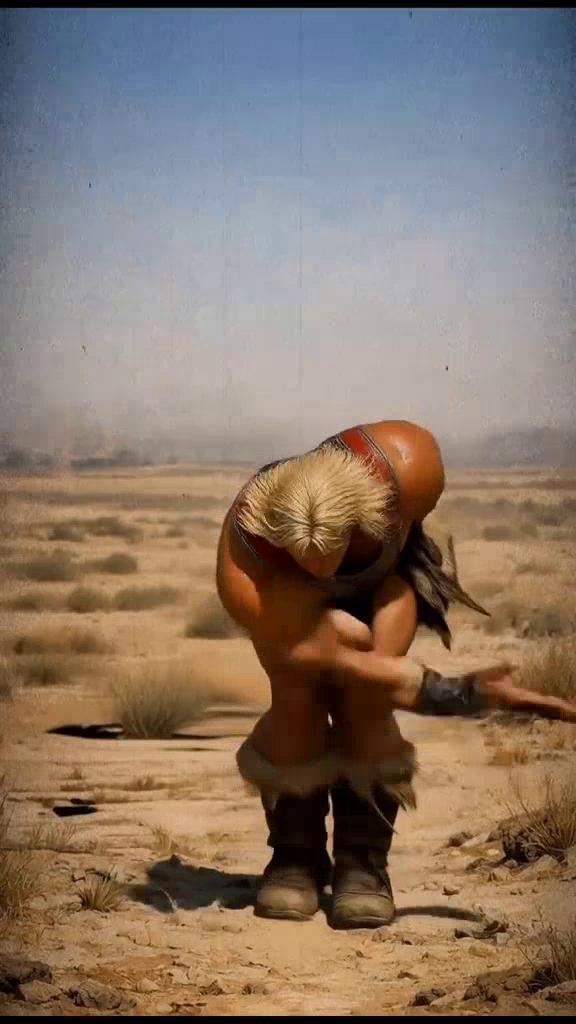

Kling 2.6 turns photographs or artwork into moving images. Its Motion Control feature captures real movement from a video and uses it to animate an image in a realistic, believable way. Instead of spending tedious hours creating keyframes or working with motion capture software and hardware, you simply provide a video of the motion you want to replicate, and the AI does the rest.

The technology analyzes the characters' motion frame by frame, capturing joint movements, limb positions, timing, and the overall flow of the movement. This motion data is then mapped onto the target image or character. Kling 2.6 also offers orientation settings that let users choose whether the animation follows the camera direction of the reference video, whether the movement adapts to the original image, and whether the image's composition stays the same.
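Kling does not document how its mapping step works internally, so the following is only a minimal sketch of the general idea of motion retargeting, not Kling's method. It assumes motion has already been extracted as 2D joint positions per frame; the names `Pose`, `body_height`, and `retarget` are hypothetical. Each joint's displacement over time is scaled to the target's body size and applied to the target's own rest pose, so timing and direction come from the reference while proportions and placement come from the target image.

```python
from typing import Dict, List, Tuple

# Hypothetical illustration of 2D motion retargeting; not Kling's implementation.
Point = Tuple[float, float]
Pose = Dict[str, Point]  # joint name -> (x, y) position in pixels


def body_height(pose: Pose) -> float:
    """Rough body size: vertical span between the highest and lowest joint."""
    ys = [y for _, y in pose.values()]
    return max(ys) - min(ys)


def retarget(reference: List[Pose], target_rest: Pose) -> List[Pose]:
    """Map per-frame joint motion from a reference clip onto a target pose.

    Each joint's displacement from the reference's first frame is scaled by the
    ratio of body sizes and added to the target's rest position.
    """
    start = reference[0]
    ref_height = body_height(start)
    if ref_height == 0:
        raise ValueError("reference pose has zero height; cannot compute scale")
    scale = body_height(target_rest) / ref_height

    frames: List[Pose] = []
    for pose in reference:
        frame: Pose = {}
        for joint, (tx, ty) in target_rest.items():
            x0, y0 = start[joint]  # where this joint started in the reference
            x, y = pose[joint]     # where it is in the current reference frame
            frame[joint] = (tx + (x - x0) * scale, ty + (y - y0) * scale)
        frames.append(frame)
    return frames


if __name__ == "__main__":
    # A two-frame reference clip in which the hand moves up and to the right.
    reference = [
        {"head": (100.0, 50.0), "hand": (120.0, 120.0), "foot": (100.0, 250.0)},
        {"head": (100.0, 50.0), "hand": (140.0, 90.0), "foot": (100.0, 250.0)},
    ]
    # A smaller target character placed elsewhere in the image.
    target_rest = {"head": (300.0, 100.0), "hand": (310.0, 150.0), "foot": (300.0, 200.0)}
    for i, frame in enumerate(retarget(reference, target_rest)):
        print(i, frame)
```

Here the target is half the reference's height, so the hand's 20-pixel rightward and 30-pixel upward movement becomes 10 and 15 pixels on the target. A production system would also need to handle depth, occlusion, and per-limb proportions, which this sketch ignores.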

Producing smooth, clean animations without skips or loops is one of Motion Control's biggest selling points, making it well suited to engaging social media videos, cinematic content, and polished promotional videos. With smart motion extraction, flexible positioning, and ease of use, Kling 2.6 Motion Control lets video creators produce high-quality animated videos quickly, even with little or no animation experience.